The Art of Experience-Based Testing: Intuition Meets Quality Assurance

Experience-based testing relies on skills, intuition, and lessons learned from past projects to find defects that scripted tests often miss. Closely related to exploratory testing, it focuses on learning, adapting, and testing simultaneously rather than following predefined steps. Instead of rigid test cases, testers use real-world knowledge about how software tends to break and how users actually behave.

Experience-based testing helps teams test smarter and faster when exploring new features or hunting down stubborn bugs. This guide explains what experience based testing is, when to use it, and how to apply it in real projects, with ready-to-use examples and techniques.

What is experience-based testing and why does it matter?

A tester runs through every scripted test case, everything passes, and the software ships. Two days later, users report a bug that seems obvious in hindsight. The test cases covered expected paths, but nobody thought to click that button twice in a row or paste an emoji into the search field.

This is where experience-based testing fits in.

Experience-based testing is an approach that relies on a tester’s experience, skill, intuition, and accumulated knowledge to design and execute tests. Rather than following rigid scripts, testers draw on their understanding of how software tends to break, what users actually do, and where defects typically hide.

The cook’s intuition: Adapting beyond the recipe

Think of it as the difference between following a recipe exactly and cooking by feel. A novice cook measures every ingredient precisely. An experienced cook knows when the sauce needs more salt just by looking at it. Both approaches work, but the experienced cook adapts when something unexpected happens.

Experience-based testing complements structured approaches like equivalence partitioning or boundary value analysis. Those techniques follow predictable rules and produce consistent test cases. Experience-based testing is different: ten testers might explore the same feature and find ten different bugs, because each brings unique knowledge to the task.

Why does this matter? Software defects don’t follow rules. They hide in unexpected places and emerge from unusual user behaviors. Structured testing catches the predictable bugs; experience-based testing catches many of the ones that remain.

When to use experience-based testing (and when to skip it)

Experience-based testing shines brightest in certain situations, but it’s not always the right tool for the job. Knowing when to reach for this approach, and when to rely on more structured methods, is part of becoming an effective tester.

Where experience-based testing excels

The approach works particularly well when documentation is thin or missing entirely. Many real-world projects lack detailed specifications, especially in fast-moving startups or during early development phases. When there’s no comprehensive requirements document to derive test cases from, experience becomes the primary guide.

Time pressure is another classic scenario. When a release deadline looms and there’s no time to write and execute hundreds of scripted test cases, exploratory sessions can cover ground quickly. A skilled tester can learn about the software, identify risks, and find critical bugs in a fraction of the time formal testing would require.

Early-stage exploration benefits enormously from this approach. Before writing detailed test cases, it helps to understand what the software actually does. Experience-based testing provides that initial reconnaissance, revealing how features work, where the rough edges are, and what areas deserve deeper attention.

Low-risk systems with limited complexity are also good candidates. Not every piece of software needs exhaustive formal testing. For internal tools or features with minimal user impact, experience-based testing provides adequate coverage without excessive overhead.

Where structured testing is better

Contractual and regulatory requirements often demand documented test coverage. When a client or regulatory body needs to see exactly what was tested and how, experience-based testing alone won’t suffice. Auditors want matrices, traceability, and evidence.

Critical systems where failure carries severe consequences need systematic approaches. Medical devices, financial systems, safety-critical software: these demand rigorous, documented testing that can be reviewed, repeated, and verified.

Complex scenarios with many interacting variables benefit from structured techniques that ensure comprehensive coverage. Experience-based testing might miss edge cases that systematic approaches would catch.

The practical reality

Most testing combines both approaches. Structured techniques provide the foundation and ensure coverage of known requirements. Experience-based testing fills the gaps, catches unexpected issues, and brings human insight to the process. The question isn’t which approach to use, but how to balance them for each situation.

| Situation | Experience-based testing | Structured testing |
|---|---|---|
| Thin documentation | ✅ Primary approach | Limited application |
| Time pressure | ✅ Quick coverage | May be too slow |
| Early exploration | ✅ Ideal fit | Premature |
| Regulatory compliance | Supporting role | ✅ Required |
| Critical systems | Supplement only | ✅ Essential |
| Complex integration | Valuable addition | ✅ Systematic coverage |

Experience-based testing techniques

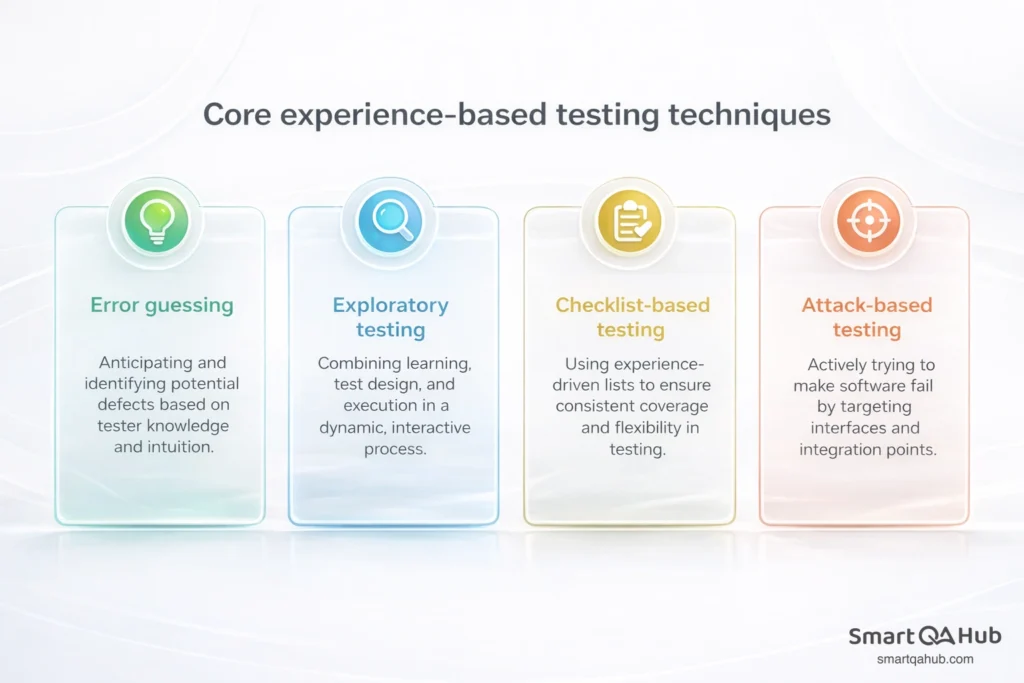

Experience-based testing isn’t a single method but a collection of approaches that leverage tester knowledge in different ways. Four techniques form the core toolkit, each suited to different situations and testing goals.

Error guessing

Error guessing in software testing is exactly what it sounds like: anticipating where defects are likely to hide based on knowledge and intuition. It’s the testing equivalent of a mechanic who hears an engine and immediately suspects the timing belt.

The technique draws on multiple knowledge sources. Past experience with the application reveals its weak spots. Understanding of common programming mistakes highlights likely error patterns. Familiarity with similar applications suggests where problems typically occur.

A methodical approach improves error guessing effectiveness. Rather than random hunches, experienced testers build mental catalogues of error-prone areas: input validation, state transitions, integration points between components, error handling paths, and concurrent operations.

Pro tip: Here’s how error guessing might work in practice. A tester examining a login form doesn’t just verify that valid credentials work. They think: “What usually breaks here?” The mental checklist might include: SQL injection in the username field, extremely long inputs, empty submissions, rapid repeated attempts, special characters in passwords, and behavior when the session expires mid-login.
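Writing that mental checklist down as data means the same guesses can be replayed on every build. The sketch below is a minimal illustration, assuming a hypothetical in-process `login(username, password)` function and an assumed 64-character username limit; neither comes from a real application. Rapid repeated attempts and mid-login session expiry are left out because they need a running system.

```python
MAX_USERNAME_LEN = 64  # assumed limit for this sketch


def login(username: str, password: str) -> str:
    """Hypothetical login check standing in for the real form handler."""
    if not username or not password:
        return "error: missing credentials"
    if len(username) > MAX_USERNAME_LEN:
        return "error: username too long"
    if username == "alice" and password == "s3cret!":
        return "ok"
    return "error: invalid credentials"


# Error-guessing catalogue: inputs chosen because they often break login forms.
GUESSES = {
    "empty submission": ("", ""),
    "SQL injection": ("' OR '1'='1' --", "x"),
    "very long username": ("a" * 10_000, "x"),
    "special characters in password": ("alice", "p@ss'\"<>;%"),
    "whitespace around username": ("  alice  ", "s3cret!"),
    "emoji in username": ("ali😀ce", "s3cret!"),
}


def run_guesses(handler, guesses):
    """Feed each guess to the handler; an unhandled exception is itself a finding."""
    results = {}
    for name, (user, pwd) in guesses.items():
        try:
            results[name] = handler(user, pwd)
        except Exception as exc:
            results[name] = f"crash: {type(exc).__name__}"
    return results


for name, outcome in run_guesses(login, GUESSES).items():
    print(f"{name:32} -> {outcome}")
```

The code only collects outcomes; deciding whether, say, a whitespace-padded username should be rejected is still the tester’s judgment.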

Exploratory testing

Exploratory testing combines learning, test design, and test execution into a single activity. Rather than separating these phases, the tester does all three simultaneously, using discoveries to guide the next steps.

Think of it as a conversation with the software. Each interaction reveals something new, which prompts the next question. Click a button, observe the result, form a hypothesis, test it, learn, repeat. The process is dynamic and responsive, adapting in real-time to what the software reveals.

Session-based exploratory testing adds structure to this fluid approach. Testing happens in defined time blocks, typically 60 to 90 minutes, guided by a charter that focuses the exploration. The charter specifies what to explore, what resources or techniques to use, and what information to look for.

Example:

A simple charter might read: “Explore the shopping cart functionality, focusing on edge cases around quantity changes and item removal, to discover usability issues and calculation errors.”
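A charter has three parts: what to explore, the resources or techniques to use, and the information to look for. The sketch below captures that structure as a small record with a timebox. The `Charter` and `Session` names and the 90-minute default are illustrative assumptions, not part of any standard tool.

```python
from dataclasses import dataclass, field
from datetime import timedelta


@dataclass
class Charter:
    """Three parts of a charter: target, resources/techniques, information sought."""
    target: str
    resources: str
    information: str

    def __str__(self) -> str:
        return (f"Explore {self.target}, with {self.resources}, "
                f"to discover {self.information}.")


@dataclass
class Session:
    """A time-boxed exploratory session guided by one charter."""
    charter: Charter
    timebox: timedelta = timedelta(minutes=90)  # assumed default; 60 to 90 min is typical
    notes: list[str] = field(default_factory=list)

    def within_timebox(self, elapsed: timedelta) -> bool:
        return elapsed <= self.timebox


cart = Charter(
    target="the shopping cart functionality",
    resources="edge cases around quantity changes and item removal",
    information="usability issues and calculation errors",
)
session = Session(cart, timebox=timedelta(minutes=60))
print(cart)
```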

Exploratory testing works particularly well when specifications are incomplete, when learning a new system, when time doesn’t allow for formal test case development, or when supplementing scripted tests with human curiosity.

Checklist-based testing

Checklist-based testing uses experience-driven lists to guide the testing process. The checklist reminds testers what to examine, ensuring consistent coverage while still allowing flexibility in how each item is tested.

Difference between checklists and detailed test cases

Unlike detailed test cases with specific steps and expected results, checklists operate at a higher level. A checklist item might say “verify image upload” without specifying the exact images to upload, the sequence of steps, or the precise expected outcomes. The tester applies judgment about how to test each item.

Checklists emerge from accumulated experience. After testing similar features repeatedly, patterns become apparent. Image uploads, for instance, commonly need verification of: supported file formats, size limits, error handling for invalid files, upload progress indication, successful display after upload, and behavior when uploads fail mid-process.

Checklists also evolve. When a new issue surfaces, it goes on the list for future testing. When an item becomes obsolete, it comes off. The checklist becomes a living document that grows more valuable over time.
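Because a checklist grows and shrinks, it helps to keep it somewhere it can be edited and reused rather than only in a tester’s head. A minimal sketch, assuming a hypothetical `Checklist` class; the item added and the item retired at the end are invented examples of that evolution.

```python
from dataclasses import dataclass, field


@dataclass
class Checklist:
    """A living checklist: items are added as issues surface and retired when obsolete."""
    feature: str
    items: list[str] = field(default_factory=list)

    def add(self, item: str) -> None:
        """Add a reminder unless it is already on the list."""
        if item not in self.items:
            self.items.append(item)

    def retire(self, item: str) -> None:
        """Remove an item that no longer applies; unknown items are ignored."""
        if item in self.items:
            self.items.remove(item)


image_upload = Checklist("image upload", [
    "supported file formats",
    "size limits",
    "error handling for invalid files",
    "upload progress indication",
    "successful display after upload",
    "behavior when uploads fail mid-process",
])

# Hypothetical evolution: a new issue surfaced, and an old item became obsolete
# (for example, after a redesign removed the progress indicator).
image_upload.add("file names containing non-ASCII characters")
image_upload.retire("upload progress indication")
for item in image_upload.items:
    print("[ ]", item)
```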

Attack-based testing

Attack-based testing takes an adversarial stance, actively trying to make the software fail. It’s closely related to negative testing but focuses specifically on security-related failures and interactions with external elements.

The approach targets interfaces and integration points: APIs, database connections, file systems, network communications, and operating system interactions. These boundaries between components often harbour vulnerabilities because they’re where assumptions break down and unexpected inputs appear.

The right questions to ask

Attack testing asks questions like: What happens if this API receives malformed data? Can database queries be manipulated through user input? Will the system handle unexpected file system states? How does it respond to network interruptions?

The technique requires thinking like an adversary. Rather than asking “does this work correctly?”, the question becomes “how could someone break this, intentionally or accidentally?” This mindset uncovers issues that normal testing might miss.
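For an API, those attack questions translate into a list of hostile payloads and one rule: the endpoint should answer every one with a clean client error, never a crash or a server error. The sketch below assumes a hypothetical JSON endpoint, `handle_order`, with a single `quantity` field, standing in for a real service.

```python
import json


def handle_order(raw_body: str) -> tuple[int, str]:
    """Hypothetical endpoint: parse a JSON order and return (HTTP status, message)."""
    try:
        data = json.loads(raw_body)
    except json.JSONDecodeError:
        return 400, "malformed JSON"
    if not isinstance(data, dict):
        return 400, "expected a JSON object"
    qty = data.get("quantity")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(qty, bool) or not isinstance(qty, int) or qty <= 0:
        return 422, "quantity must be a positive integer"
    return 201, "created"


# Hostile payloads: truncated, mistyped, out-of-range, and type-confused input.
ATTACKS = [
    "",                        # empty body
    "{",                       # truncated JSON
    "[]",                      # wrong top-level type
    '{"quantity": "5"}',       # number sent as a string
    '{"quantity": -1}',        # negative value
    '{"quantity": true}',      # boolean where an integer is expected
    '{"quantity": 1e309}',     # parses to float infinity
    '{"quantity": 5}',         # control case: a valid request
]


def probe(handler, payloads):
    """Return payloads that crash the handler or produce a 5xx status."""
    findings = []
    for body in payloads:
        try:
            status, _ = handler(body)
        except Exception as exc:
            findings.append((body, f"crash: {type(exc).__name__}"))
            continue
        if status >= 500:
            findings.append((body, f"server error {status}"))
    return findings


print(probe(handle_order, ATTACKS))
```

Pointing `probe` at a handler that parses without checks, for example one that indexes `json.loads(body)["quantity"]` directly, turns the truncated and wrong-type payloads into crashes, which is exactly the kind of finding this technique is after.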

Experience-based testing example: Putting it into practice

Theory becomes clearer with a concrete experience-based testing example. Consider testing a contact form on a website, a common feature that seems simple but hides numerous potential issues.

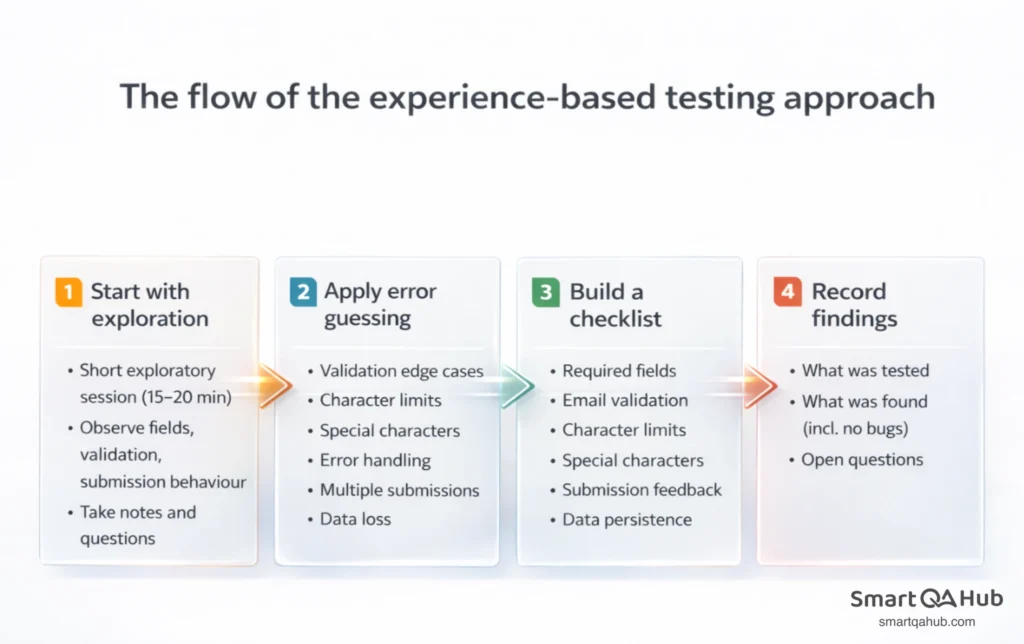

Starting with exploration

Rather than immediately writing test cases, begin with a short exploratory session. The goal is learning: what fields exist, what validation appears, what happens on submission, where the data goes. Spend 15 to 20 minutes just using the form, observing behaviour, and taking notes.

During exploration, questions emerge:

- Does the form validate email format?

- What’s the character limit on the message field?

- Does it handle special characters?

- What feedback appears after submission?

- How long does submission take?

Applying error guessing

Now apply experience to identify likely problem areas. Contact forms commonly fail in predictable ways:

- Email validation that’s too strict (rejecting valid addresses) or too loose (accepting invalid ones)

- Character limits that truncate messages without warning

- Special characters that cause display issues or security vulnerabilities

- Missing or unclear error messages

- Submission buttons that can be clicked multiple times

- Lost form data when validation fails

Each of these becomes a testing target.
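The first guess, email validation that is too strict or too loose, can be checked with two lists. The sketch assumes a hypothetical validator built on a quick “something@something” regular expression. Whether a dotless domain such as `test@test` should be rejected is a product decision, so the sketch only surfaces it for review.

```python
import re

# Hypothetical validator under test (an assumption for this sketch): it only
# requires one @ with non-space characters on both sides.
NAIVE_EMAIL = re.compile(r"[^@\s]+@[^@\s]+")


def is_valid_email(address: str) -> bool:
    return NAIVE_EMAIL.fullmatch(address) is not None


# Valid but unusual addresses that an over-strict validator often rejects.
SHOULD_ACCEPT = [
    "user@example.com",
    "first.last+tag@example.co.uk",
    "o'brien@example.ie",
]

# Malformed or doubtful addresses that an over-loose validator often accepts.
SUSPICIOUS = [
    "plainaddress",        # no @ at all
    "user@",               # missing domain
    "@example.com",        # missing local part
    "user@@example.com",   # double @
    "test@test",           # no TLD: possibly intentional, worth asking about
]

too_strict = [a for a in SHOULD_ACCEPT if not is_valid_email(a)]
too_loose = [a for a in SUSPICIOUS if is_valid_email(a)]
print("valid but rejected:", too_strict)
print("suspicious but accepted:", too_loose)
```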

Building a checklist

From exploration and error guessing, a checklist emerges for future testing:

| Area | Check |
|---|---|
| Required fields | Verify all mandatory fields enforce input |
| Email validation | Test valid edge cases and invalid formats |
| Character limits | Confirm limits and user feedback |
| Special characters | Test handling in all fields |
| Submission feedback | Verify success and error messages |
| Multiple submission | Test rapid clicking and page refresh |
| Data persistence | Check form state after validation errors |
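The “Multiple submission” row is one check that can be automated once a tester has guessed it. The sketch below simulates a double-click as two posts carrying the same form token. The `ContactInbox` class and its token-based deduplication are hypothetical: one possible server-side guard, not how any particular site handles duplicates.

```python
import uuid


class ContactInbox:
    """Hypothetical server-side store for contact-form submissions."""

    def __init__(self, dedupe: bool) -> None:
        self.dedupe = dedupe
        self.messages: list[str] = []
        self._seen_tokens: set[str] = set()

    def submit(self, token: str, message: str) -> bool:
        """Store a message; with dedupe on, a repeated form token is ignored."""
        if self.dedupe and token in self._seen_tokens:
            return False
        self._seen_tokens.add(token)
        self.messages.append(message)
        return True


def double_click(inbox: ContactInbox) -> int:
    """Simulate a double-click: the same rendered form (one token) posts twice."""
    token = str(uuid.uuid4())  # one token per rendered form
    inbox.submit(token, "Hello")
    inbox.submit(token, "Hello")
    return len(inbox.messages)


print("without guard:", double_click(ContactInbox(dedupe=False)))
print("with guard:", double_click(ContactInbox(dedupe=True)))
```

Disabling the button in the browser helps, but a check like this on the server is what would also catch a retried request that slips past the interface.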

Recording findings

Document what was tested and what was found. Even when no bugs appear, notes about tested areas prevent duplicate effort later.

A simple session log might read:

Session: Contact form exploration

Duration: 25 minutes

Charter: Explore contact form submission with focus on input validation and error handling

Findings:

- Email field accepts “test@test” without TLD – possible validation gap

- Message field truncates at 500 characters without warning

- Submit button remains active during submission – double-click sends duplicate

Questions for follow-up:

- What’s the expected maximum message length?

- Are duplicate submissions handled server-side?
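Keeping session logs in a consistent structure makes them easier to search and to compare across sessions. As a minimal sketch, assuming a hypothetical `SessionLog` record, the log above can be stored as data and rendered back into the same plain-text layout, with open questions collected across sessions.

```python
from dataclasses import dataclass, field


@dataclass
class SessionLog:
    """Structured record of one exploratory session (hypothetical format)."""
    title: str
    minutes: int
    charter: str
    findings: list[str] = field(default_factory=list)
    questions: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Render the log in the plain-text layout used for session notes."""
        lines = [
            f"Session: {self.title}",
            f"Duration: {self.minutes} minutes",
            f"Charter: {self.charter}",
            "Findings:",
            *(f"- {item}" for item in self.findings),
            "Questions for follow-up:",
            *(f"- {item}" for item in self.questions),
        ]
        return "\n".join(lines)


def open_questions(logs: list[SessionLog]) -> list[str]:
    """Collect follow-up questions across sessions so none get lost."""
    return [q for log in logs for q in log.questions]


log = SessionLog(
    title="Contact form exploration",
    minutes=25,
    charter="Explore contact form submission with focus on input validation and error handling",
    findings=[
        'Email field accepts "test@test" without TLD: possible validation gap',
        "Message field truncates at 500 characters without warning",
        "Submit button remains active during submission: double-click sends duplicate",
    ],
    questions=[
        "What's the expected maximum message length?",
        "Are duplicate submissions handled server-side?",
    ],
)
print(log.render())
```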

Advantages and disadvantages to watch out for

Like any testing approach, experience-based testing has strengths and limitations. Understanding both helps apply the technique effectively.

Why experience-based testing works

Experience-based testing leverages human insight and adaptability to uncover issues that scripted approaches often miss.

Common mistakes to avoid

Used carelessly, experience-based testing can create blind spots instead of insights.

Experience-based testing terminology

Error guessing: Anticipating defect locations based on tester knowledge and intuition about where bugs typically hide.

Exploratory testing: Simultaneous learning, test design, and test execution, with each activity informing the others in real-time.

Checklist-based testing: Using experience-derived lists to guide testing while allowing flexibility in execution.

Attack-based testing: Testing from an adversarial perspective, actively trying to make the software fail, particularly at integration points.

Test charter: A brief statement guiding an exploratory testing session, specifying what to explore and what information to seek.

Session-based testing: Time-boxed exploratory testing, typically 60-90 minutes, followed by a debrief to capture findings.

FAQ: Frequently asked questions about experience-based testing

Can experience-based testing work without much testing experience?

How does experience-based testing fit with test automation?

Should test cases be written for findings from experience-based testing?

How much time should go to experience-based versus structured testing?

Experience is the tester’s secret weapon

Experience-based testing transforms tester knowledge into a practical defect-finding tool. Rather than replacing structured approaches, it complements them, catching unexpected bugs that scripted tests miss. The four core techniques – error guessing, exploratory testing, checklist-based testing, and attack-based testing – each leverage experience differently, providing flexibility for various testing situations.

The approach builds skills with every session. Each bug found adds to the mental library. Each exploration reveals new patterns. Even those new to testing bring relevant experience that applies immediately. Next step: pick a feature and spend 20 focused minutes exploring it. Take notes. See what emerges.